Real-world testing alone cannot provide sufficient coverage for safety-critical automotive AI systems:

● Extremely rare occurrence of critical failure scenarios

● Years of driving required to expose corner cases

● High cost of data collection and manual annotation

● Inability to safely reproduce hazardous situations

● Limited ground truth availability for precise benchmarking

Detecting failures in complex systems is extremely difficult in real-world environments. Critical failure conditions are rare, unpredictable, and often unsafe or prohibitively expensive to reproduce.

Edge cases, such as extreme weather, sensor malfunctions, unexpected user behaviour, or unusual system interactions, may occur only once in thousands or millions of operating hours. As a result, organisations lack sufficient data to test, validate, and harden their systems before failures happen in production.
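
To make the scale concrete, the short sketch below estimates how many operating hours are needed before a rare event is likely to be seen at least once. It assumes events arrive as a Poisson process with a constant rate, which is a simplification; the function name `hours_to_observe` and the example rate of one event per million hours are illustrative and not measured values.

```python
import math


def hours_to_observe(rate_per_hour: float, confidence: float = 0.95) -> float:
    """Hours needed to see at least one event with probability `confidence`.

    Assumes a Poisson process with constant rate, so that
    P(at least one event in T hours) = 1 - exp(-rate * T).
    """
    if rate_per_hour <= 0:
        raise ValueError("rate_per_hour must be positive")
    if not 0.0 < confidence < 1.0:
        raise ValueError("confidence must be strictly between 0 and 1")
    return -math.log(1.0 - confidence) / rate_per_hour


# Illustrative rate only: one event per million operating hours.
hours = hours_to_observe(1e-6, confidence=0.95)
print(f"{hours:,.0f} operating hours for a 95% chance of one observation")
```

Under these assumptions, an event that occurs once per million operating hours needs roughly three million hours of operation for a 95% chance of being observed even once, and a single observation is far from enough to validate a fix.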

Relying solely on real-world data leaves blind spots, increases risk, and limits the ability to proactively identify vulnerabilities before they cause downtime, safety issues, or financial loss.

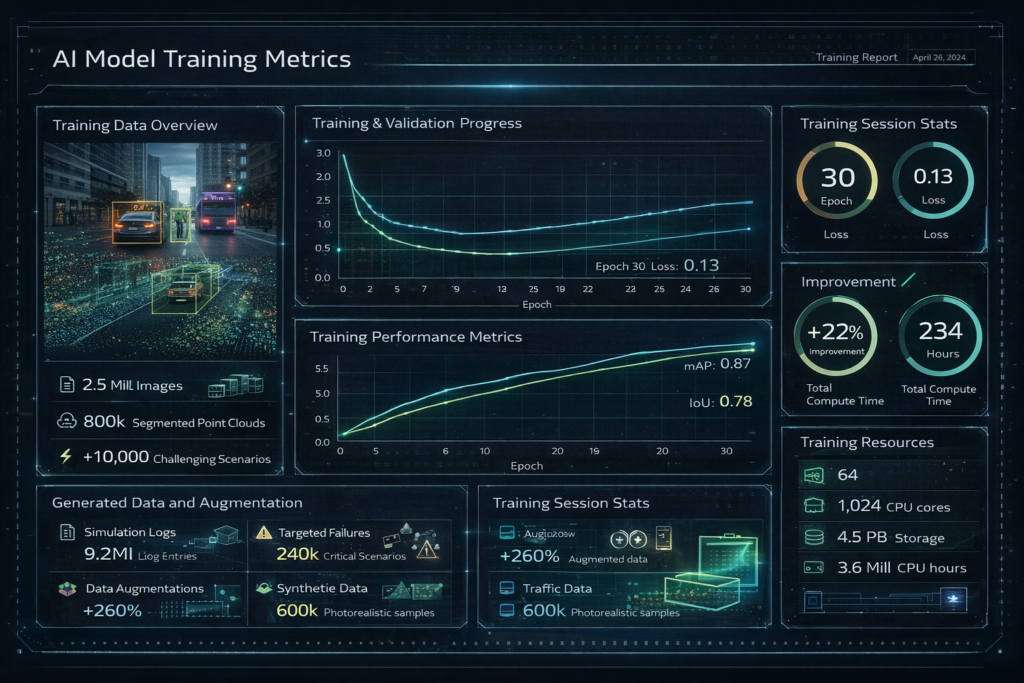

Our toolchain provides a closed-loop AI validation and data generation framework that enables:

● Large-scale testing of AI models under diverse and adverse conditions

● Objective benchmarking using accurate ground truth

● Early detection of failure modes

● Automated generation of targeted synthetic data for retraining
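
The closed loop described above can be sketched in a few lines. This is a toy illustration under stated assumptions, not the toolchain's actual API: `Scenario`, `find_failures`, `plan_synthetic_data`, and the stand-in `toy_model` are all hypothetical names, and the "model" simply miscounts objects in fog so that the loop has something to detect.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Scenario:
    """A simulated test case; ground truth is exact because the scene is synthetic."""
    name: str
    condition: str      # e.g. "clear", "fog", "rain"
    ground_truth: int   # e.g. number of pedestrians placed in the scene


Model = Callable[[Scenario], int]


def find_failures(model: Model, scenarios: List[Scenario]) -> List[Scenario]:
    """Benchmark the model against ground truth and return failing scenarios."""
    return [s for s in scenarios if model(s) != s.ground_truth]


def plan_synthetic_data(failures: List[Scenario],
                        samples_per_failure: int = 100) -> Dict[str, int]:
    """Request more synthetic samples for the conditions where failures cluster."""
    counts = Counter(s.condition for s in failures)
    return {cond: n * samples_per_failure for cond, n in counts.items()}


def toy_model(scenario: Scenario) -> int:
    """Hypothetical stand-in for a perception model: misses one object in fog."""
    if scenario.condition == "fog":
        return scenario.ground_truth - 1
    return scenario.ground_truth


scenarios = [
    Scenario("clear_day", "clear", 2),
    Scenario("fog_crossing", "fog", 3),
    Scenario("fog_highway", "fog", 1),
    Scenario("night_rain", "rain", 2),
]

failures = find_failures(toy_model, scenarios)
plan = plan_synthetic_data(failures)
print([s.name for s in failures])
print(plan)
```

In a real loop, the planned samples would be generated, added to the training set, and the retrained model would be evaluated again on the same scenarios; the sketch stops at the planning step to stay self-contained.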